How to Fix “Excluded by Noindex Tag” in GSC for Better Indexing

- accuindexcheck

If you’ve been wondering why some pages refuse to show up in Google search results despite being optimized and published, it’s probably not Google’s doing. The site itself might be telling search engines, through its indexing directives, “Ignore this page.” That is what is happening when a page is reported as “Excluded by noindex tag” in GSC. And no, it’s not always intentional.

This guide explains how to identify what is causing the noindex status, how to remove it safely, and how to keep your important pages open to indexing. We will look at practical ways to fix and prevent the problem, whether it comes from a plugin setting, a theme, or a manually added tag.

What Does “Excluded by Noindex Tag” Mean in GSC?

If Google Search Console shows a URL’s status as “Excluded by ‘noindex’ tag,” it means that Google found the page, usually through your sitemap, internal links, or backlinks, but was told not to index it. This instruction is generally issued through a meta tag such as <meta name="robots" content="noindex"> or through an HTTP header carrying a noindex directive. Googlebot crawls the page but does not add it to its search index, so the page will not appear as a search result while the directive is in place.

Sometimes the noindex is deliberate (on a thank-you page or admin URLs, for example). Most of the time, though, it is an accident caused by theme settings, SEO plugins, or developer configurations that were set up incorrectly. If the noindex tag is on pages that you want search engines to index, you will want to fix that immediately.

Why Does the “Excluded by Noindex Tag” Issue Occur?

Understanding why the “Excluded by noindex tag” message appears in Google Search Console is crucial to troubleshooting it. Here are the most common causes explained in detail:

1. SEO Plugin Settings

SEO plugins such as Yoast, Rank Math, or All in One SEO let you control indexing settings straight from the WordPress dashboard. That convenience can be dangerous. For instance, if a post or page is set to “noindex” with a checkbox in the plugin settings, Google will be prevented from showing it in search results.

This often happens when:

- Authors copy settings from another page.

- Bulk edits are carried out without reviewing individual page settings.

- The default setting for some post types is noindex, and nobody notices.

2. Theme or Template Defaults

Some WordPress themes and page templates are coded to add a noindex tag to specific sections, particularly archives, category pages, search results, or author pages. Although this is often done for SEO reasons (to avoid thin or duplicate content), it can also block valuable content.

Themes with built-in SEO options or custom templates can:

- Add noindex tags without showing them in the visual editor.

- Apply site-wide settings that affect multiple pages at once.

- Conflict with plugin settings, leading to unexpected behavior.

3. Development Oversight

During development or staging, developers add noindex tags to prevent test content from being indexed by Google. That is a smart move during the build phase, but the problem arises if those noindex tags are not removed when the site goes live.

Common developer mistakes include:

- Leaving meta tags un-updated after launch.

- Leaving server-level HTTP headers set to noindex.

- Reusing noindex templates for important content.

4. HTTP Header Rules

Unlike meta tags, which sit in the page source, HTTP headers are sent by the server and are harder to track without a dedicated tool. These headers can contain directives such as X-Robots-Tag: noindex that tell search engines not to index the page, even though everything looks fine in the HTML source.

This often occurs when:

- A noindex rule is set in .htaccess, a server .conf file, or at the CDN, depending on the environment.

- Security or performance tools apply blocking rules by default.

- The hosting environment reuses server rules across different projects as an optimization.

You can check for these headers with curl, Screaming Frog, or AccuIndex.
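As a rough illustration of what such a header check involves, here is a minimal Python sketch. The function names are hypothetical, and the parsing is deliberately simplified: it treats `noindex` and `none` as blocking directives and tolerates a bot prefix such as `googlebot:`.

```python
from urllib.request import Request, urlopen


def header_has_noindex(header_values):
    """Return True if any X-Robots-Tag value carries a noindex (or none) directive."""
    for value in header_values:
        # A value may look like "noindex, nofollow" or "googlebot: noindex".
        for part in value.lower().split(","):
            directive = part.split(":")[-1].strip()
            if directive in ("noindex", "none"):
                return True
    return False


def fetch_x_robots_tag(url):
    """Send a HEAD request and return every X-Robots-Tag header value (may be empty)."""
    request = Request(url, method="HEAD")
    with urlopen(request, timeout=10) as response:
        return response.headers.get_all("X-Robots-Tag") or []
```

Calling `header_has_noindex(fetch_x_robots_tag(url))` on one of your own URLs would report whether the server sends the directive. Some servers answer HEAD requests differently from GET requests, so treat a clean result as a hint rather than proof; `curl -I` against the same URL shows the raw headers for comparison.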

5. Duplicate or Thin Content

A noindex tag is often recommended for pages that have little or no unique value. For instance:

- Product listing pages generated from searches

- Internal site search result pages

- Tag or archive pages auto-generated by the system

Some SEO plugins and CMS platforms automatically apply noindex tags to pages they judge to be too thin or too low in quality. While this can be helpful, overly aggressive detection logic can block pages that would otherwise offer value.

6. Robots Meta Tag Misuse

The <meta name="robots" content="noindex"> tag is a direct way of telling Google to exclude a page. When it is inserted manually into templates or site-wide code, it can:

- Inadvertently affect several pages at once.

- Be very difficult to track if it is applied programmatically.

- Easily escape notice if it is overlooked during updates or migrations.

If, for instance, a developer inserts this tag into a general template file (say header.php), every page using that template inherits the noindex tag by default, even pages that are meant to rank.

To counter that, always check the global meta settings in your theme files, and avoid hard-coding noindex directives unless absolutely necessary.

How to Fix “Excluded by Noindex Tag” – Step-by-Step

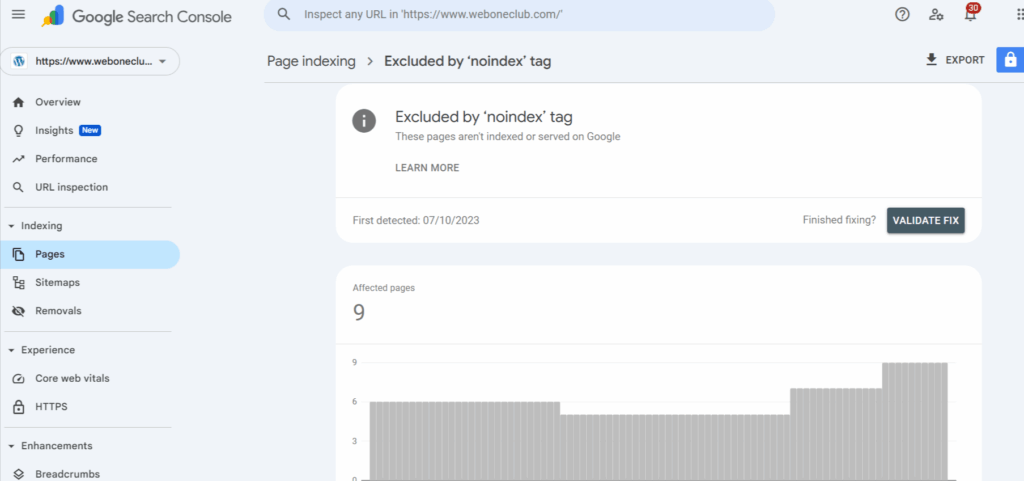

Step 1: Confirm the Issue in Google Search Console

Start by going into Google Search Console (GSC) and selecting your website property.

Navigate:

- Indexing > Pages > Excluded

- Look for the status: “Excluded by ‘noindex’ tag.”

These are URLs that Google discovered but left unindexed because a noindex directive blocked them. Click on any URL and use the URL Inspection feature. GSC will show whether the page is currently eligible to be indexed and where the noindex directive comes from: a meta tag or an HTTP header.

Step 2: Check the Page Source Code or Meta Tags

Open the problematic URL directly in your browser. If the live page is not available, a cached copy will also do. Right-click and select “View Page Source” from the context menu, or press Ctrl+U on Windows or Cmd+Option+U on a Mac to bring up the page source.

Search for:

<meta name="robots" content="noindex">

or

<meta name="robots" content="noindex, nofollow">

If present, this meta tag tells search engines not to index the page. If there is no such meta tag, the directive may be coming from an HTTP header instead, so check the response headers as well.
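When there are many pages to check, the manual search can be scripted. The following is a minimal sketch using Python’s standard-library HTML parser; the class and function names are hypothetical, and it only looks at `robots` and `googlebot` meta tags in the HTML you pass in.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None for valueless attributes.
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "").lower()
            self.directives.extend(part.strip() for part in content.split(","))


def page_has_meta_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex or none."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return any(directive in ("noindex", "none") for directive in parser.directives)
```

For example, `page_has_meta_noindex('<meta name="robots" content="noindex, nofollow">')` evaluates to `True`. Tags injected by JavaScript after the page loads would not be visible to this kind of check.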

Step 3: Check SEO Plugin or Theme Settings

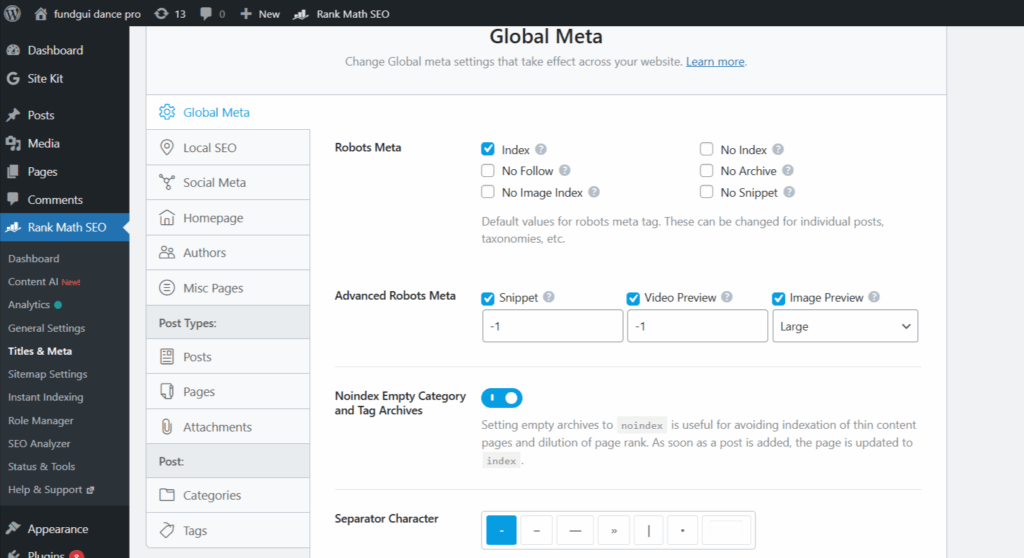

If you are using a CMS such as WordPress, your meta robots tags are probably controlled by a plugin such as Yoast SEO, Rank Math, or All in One SEO, so check there first.

In WordPress, this is the path you take:

- Go into the editor of the page or post.

- Scroll down until you find the SEO section.

- Look for an option such as “Do you want search engines to display this page in search results?” or a checkbox that says “noindex this page.”

Uncheck it, or set the page to be allowed in search results. Then go back to the plugin’s global settings and verify whether:

- Whole post types or taxonomies (such as pages, categories, or tags) have been set to noindex.

- Archives, author pages, or attachments that were not meant to be blocked have been blocked by accident.

Themes can also impose noindex tags. Look through your theme’s SEO panel or settings, if there is one. If there is none and custom templates are in use, look at the <head> section of those templates.

Step 4: Remove the Noindex Tag from Important Pages

Once you have traced the noindex directive to its origin, whether that is a plugin, a theme, or custom code, remove it from the pages you want indexed.

- For plugins, set the option to allow indexing.

- For hard-coded tags, remove the noindex meta robots tag or replace it with something like:

<meta name="robots" content="index, follow">

- For HTTP headers, check that the server (.htaccess, NGINX, CDN configuration) does not send X-Robots-Tag: noindex.
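For the server-side check, one option is to search configuration files for lines that set the header. The sketch below is an assumption about how you might do that, not a complete config parser: the function name is hypothetical, and it simply flags uncommented lines that mention both `X-Robots-Tag` and `noindex`, which covers common forms such as Apache’s `Header set` and NGINX’s `add_header`.

```python
def find_noindex_rules(config_text):
    """Return (line_number, line) pairs that set X-Robots-Tag with noindex."""
    matches = []
    for number, line in enumerate(config_text.splitlines(), start=1):
        stripped = line.strip()
        if stripped.startswith("#"):
            continue  # skip commented-out rules
        lowered = stripped.lower()
        if "x-robots-tag" in lowered and "noindex" in lowered:
            matches.append((number, stripped))
    return matches
```

You would read the contents of a file such as .htaccess into a string and pass it in. Rules applied at a CDN or in included config files will not show up this way, so it is still worth confirming against the live response headers.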

Step 5: Request Indexing in Google Search Console

Once the noindex tag or header is removed, go back to GSC and inspect the URL again: paste the corrected URL into the inspection bar and press Enter.

If the URL is configured correctly, GSC will return the following message:

URL is available to Google.

After that, click the “Request Indexing” button to ask Google to crawl the page again and possibly index it. This step can speed up indexing: a wait that might otherwise take days may be reduced to hours.

Step 6: Monitor Coverage Status for Changes

After the URL has been submitted, monitor the Coverage report in GSC over the next few days to confirm that the page’s status changes from Excluded to Valid or Indexed.

Also:

- Set calendar reminders to run indexing audits each month.

- Monitor indexing changes with tools like Ahrefs, Semrush, Screaming Frog, or AccuIndex.

- Keep a record of every page you have fixed and resubmitted, in case you need it in the future.

Best Practices to Avoid Future Noindex Issues

After fixing the “Excluded by noindex tag” errors, the next step is to prevent them from recurring. Unintentional noindex tags can silently undermine your traffic, even on high-stakes pages. The best defense is to stay proactive about your SEO settings and website maintenance.

1. Set Default Indexing Behavior in SEO Plugins

Most modern SEO plugins, such as Yoast, Rank Math, or All in One SEO, offer global settings that determine the default meta robots tag for each content type (pages, posts, categories, and tags).

- The default settings for Posts, Pages, Products, or other equally important content types should be set to “index.”

- Check the settings for Media attachments, Archives, or Author pages and change them according to whether the content adds any value.

- Be sure to review the plugin’s configuration after any major updates or content migration processes.

2. Audit Your Robots Meta Settings Regularly

Robots meta tags instruct search engines how to treat each page. Over time, new noindex tags may be introduced by themes, plugins, or manual changes.

- Crawl your website quarterly with tools like Screaming Frog, Sitebulb, or AccuIndex to detect any noindex tags.

- Export the full list of pages so you know which ones carry a noindex tag.

- Make sure that only the pages you intend to exclude are marked as noindex.

3. Use Staging Environments Correctly

Noindex tags are usually applied in staging or dev environments to prevent indexing of test pages. This practice is fine if and only if the tags are removed before deployment.

- Once the site is live, make sure no noindex tag has been left behind from the staging version.

- Always use a separate URL or subdomain for staging (staging.example.com, for instance) and block it in robots.txt so it is not crawled. This is safer than relying on noindex tags that can be carried over to production.

- Recheck the robots meta tag settings as part of the final launch checklist.
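One launch-checklist item that lends itself to automation is confirming that a staging-style blanket block has not been copied into the production robots.txt. The following is a small sketch using Python’s standard `urllib.robotparser`; the function name and the example hosts are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text allows user_agent to crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical staging file: blocks everything.
staging_rules = "User-agent: *\nDisallow: /\n"
# Hypothetical production file: blocks only the admin area.
production_rules = "User-agent: *\nDisallow: /wp-admin/\n"
```

With these sample rules, `is_crawl_allowed(staging_rules, "https://staging.example.com/page")` is `False`, while `is_crawl_allowed(production_rules, "https://www.example.com/blog/post")` is `True`. Python’s parser follows the basic robots.txt rules and may not match Googlebot’s handling of every wildcard pattern, so use it as a sanity check only.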

4. Keep a List of Pages That Should Remain Noindexed (and Why)

Not all noindex tags are bad. In fact, some pages should never appear in search results—like:

- Admin or login pages

- Thank-you or confirmation pages

- Internal search results

- Low-value filters or duplicate category views

Keep a documented list of these intentionally excluded URLs, including the reason for noindexing. This way the team can avoid accidental indexing in the future, and you will have a clear reference during audits.
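Such a list can live in a spreadsheet, but keeping it in a structured form also lets you compare it against a crawl. Here is a minimal sketch, assuming a hypothetical `NoindexEntry` record and example URLs that are not taken from any real site:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NoindexEntry:
    url: str
    reason: str  # why this page is intentionally excluded


# Hypothetical documented list of intentionally noindexed pages.
INTENTIONAL_NOINDEX = [
    NoindexEntry("https://www.example.com/wp-login.php", "login page"),
    NoindexEntry("https://www.example.com/thank-you/", "confirmation page"),
]


def unexpected_noindex(crawled_noindex_urls, documented=INTENTIONAL_NOINDEX):
    """Return noindexed URLs from a crawl that are not on the documented list."""
    allowed = {entry.url for entry in documented}
    return sorted(url for url in crawled_noindex_urls if url not in allowed)
```

Feeding it the noindexed URLs exported from a crawler returns only the ones nobody has documented, which are the ones worth investigating first.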

5. Run Monthly Indexing Health Checks

Indexing issues often go unnoticed until traffic drops. By running regular checks, you can catch noindex problems early—before they affect rankings.

- Identify indexing shifts using tools like Google Search Console, AccuIndex, or Ahrefs Site Audit.

- Compare earlier reports to spot sudden shifts in indexed versus excluded pages.

- Focus especially on newly created content, top-performing pages, and recent updates.
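Comparing reports can be as simple as diffing two snapshots of URL statuses. The sketch below assumes you have already exported each report into a dictionary mapping URL to status; the function name and the status strings are illustrative, not a GSC export format.

```python
def indexing_shifts(previous, current):
    """Compare two {url: status} snapshots and report URLs whose status changed."""
    shifts = {}
    for url, new_status in current.items():
        old_status = previous.get(url, "not seen")
        if old_status != new_status:
            shifts[url] = (old_status, new_status)
    return shifts


# Hypothetical snapshots from two consecutive monthly checks.
last_month = {"/a": "indexed", "/b": "indexed"}
this_month = {"/a": "indexed", "/b": "excluded by noindex", "/c": "indexed"}
```

Here `indexing_shifts(last_month, this_month)` flags `/b` as having moved from indexed to excluded and `/c` as newly seen, while the unchanged `/a` is left out.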

FAQs

Is an “Excluded by Noindex Tag” Actually Bad for SEO?

It depends on the situation. A noindex tag is fine when it is placed intentionally on low-value or duplicate pages. It hurts SEO, however, when important pages have been excluded inadvertently.

How Can I Remove the Noindex Tag From a Page?

Either find the robots meta tag in the page’s source manually and change noindex to index, or make the change through your SEO plugin. Yoast and Rank Math are two popular SEO plugins that can do this. After the change, request re-indexing of the page in GSC.

How long does it take for Google to index my page after I remove the noindex?

Once the tag is gone and indexing has been requested through GSC, it usually takes anywhere from a few hours to a few days for Google to recrawl and index the page.

Can plugins automatically add a noindex tag?

Yes. Some SEO plugins can mark pages as noindex based on default settings, duplicate content detection, or a custom rule. Always check your plugin configuration.

What tools can show me noindex issues?

Google Search Console, Screaming Frog, and AccuIndex, as well as Ahrefs or Semrush, can report on your site and show pages with a noindex directive.

Should category and tag pages be indexed or noindexed?

It depends on your content strategy. These pages can be indexed if they provide valuable, unique content. If the content is thin or duplicated, keeping them noindexed is the better choice.

In Conclusion

The “Excluded by noindex tag” issue in Google Search Console can silently prevent valuable pages from appearing in search results. The fix tends to be straightforward, but overlooking it means missed traffic and weaker SEO performance. Once your investigation has led you to the cause, whether it lies in a plugin setting, a theme default, or something a developer has done, you can act quickly to solve the problem. More importantly, a procedure for systematically monitoring what is indexed will help keep these issues from recurring.