Index Bloating Issues: How They Affect SEO and How to Fix It

- accuindexcheck

Many website owners and SEO experts put all their energy into producing and optimizing new content, yet their rankings still fall short of expectations. A technical issue called index bloating may be one of the main reasons.

Index bloating happens when search engines index too many useless or low-quality pages on a site: duplicate content, thin content, dated archives, or parameter-based URLs. This causes two problems. First, it wastes crawl budget, making it harder for search engines to reach and prioritize your valuable content. Second, it pushes your high-quality pages down in search results.

Ignoring index bloating can hurt both your rankings and your user experience. The good news is that it can be fixed with regular site audits, better content management, and proper indexing controls. This article explains what index bloating is, how it affects SEO performance, and which steps will fix it for long-term ranking gains.

What is Index Bloating?

Index bloating occurs when search engines such as Google index more URLs from your site than are actually useful or intended. Instead of focusing on your core pages (key categories, core products or services, articles, and landing pages), the index fills up with low-value URLs and duplicates. This wastes crawl budget, clutters your indexing reports, and makes it harder for your most important content to stand out. In practical terms, index bloating is a mismatch between what should be indexed (strategic, unique, valuable pages) and what is indexed (redundant, thin, or auto-generated pages).

How It Happens on Websites

Index bloating is almost always a byproduct of technical and content-management decisions that quietly generate extra URLs. Common patterns include:

1. Duplicate Page Versions

Sometimes the same site exists in a couple of versions:

http://example.com and https://example.com

www.example.com/page versus example.com/page

In some cases, a single page is accessible through multiple URL formats, such as with or without a trailing slash (e.g., /page vs /page/).

If these duplicates have no canonical tags and are not 301-redirected, search engines treat them as separate pages, cluttering the index and splitting authority away from the main URL.
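To see how these variants collapse into one page, here is a minimal Python sketch that maps each URL to a single comparison key. It assumes a hypothetical preferred format (HTTPS, a www. host, and a trailing slash on every path); `normalize` and `group_duplicates` are illustrative names, and the trailing-slash rule is a simplification that would need exceptions for file-like paths such as /report.pdf.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def normalize(url: str) -> str:
    """Map scheme, host, and trailing-slash variants of a URL to one key.

    Assumed (hypothetical) preferred form: https, "www." host, trailing slash.
    The query string is kept; only the fragment is dropped.
    """
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if not host.startswith("www."):
        host = "www." + host
    path = parts.path or "/"
    if not path.endswith("/"):
        path += "/"
    return urlunsplit(("https", host, path, parts.query, ""))


def group_duplicates(urls):
    """Return only the keys that several distinct URLs collapse into."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


urls = [
    "http://example.com/page",
    "https://example.com/page/",
    "https://www.example.com/page",
    "https://www.example.com/about/",
]
print(group_duplicates(urls))
```

Three of the four sample URLs end up under the single key https://www.example.com/page/, which is exactly the set a canonical tag or 301 redirect should consolidate.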

2. Parameter & Filtered URLs

Setting URL parameters for sorting, filtering, or tracking is a common practice, especially with eCommerce sites:

example.com/shoes?color=red&size=8

These parameters may be useful to shoppers, but each combination creates a new URL for largely the same content. If those URLs get indexed, they quickly produce a flood of near-duplicate pages that inflate your index and split ranking signals.
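The growth is multiplicative, which is why it gets out of hand so quickly. The Python sketch below enumerates every combination of three hypothetical facets (four colors, five sizes, three sort orders) for the example.com/shoes page; the facet names and values are assumptions for illustration.

```python
from itertools import product
from urllib.parse import urlencode

# Hypothetical facet values for a single category page.
FACETS = {
    "color": ["red", "blue", "black", "white"],
    "size": ["7", "8", "9", "10", "11"],
    "sort": ["price_asc", "price_desc", "newest"],
}


def facet_urls(base: str, facets: dict) -> list:
    """Build one URL per combination, taking exactly one value from each facet."""
    names = list(facets)
    return [
        f"{base}?{urlencode(dict(zip(names, values)))}"
        for values in product(*(facets[name] for name in names))
    ]


urls = facet_urls("https://example.com/shoes", FACETS)
print(len(urls))  # 60, i.e. 4 * 5 * 3
print(urls[0])    # https://example.com/shoes?color=red&size=7&sort=price_asc
```

If each facet may also be left unset, the count rises to (4 + 1) × (5 + 1) × (3 + 1) = 120 URLs, one of which is the bare category page, and crawlers that meet the same parameters in different orders can reach even more variants.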

3. Thin or Low-Value Pages

Pages with very little unique content, such as empty category pages, tag pages holding a single post, or placeholder pages, offer searchers no real value. If they get indexed, they consume crawl budget and bury more relevant pages in search results.
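A rough way to shortlist thin pages is a word-count threshold. This Python sketch assumes you have already extracted each page's main text (navigation and boilerplate removed) into a dict keyed by URL; the 150-word cut-off is a hypothetical starting point, and word count is only a proxy, so review flagged pages by hand before removing or noindexing them.

```python
import re

# Hypothetical cut-off; the right number depends on your site and page type.
MIN_WORDS = 150


def word_count(text: str) -> int:
    """Count word-like tokens in a page's already-extracted main text."""
    return len(re.findall(r"\w+", text))


def flag_thin_pages(pages: dict, min_words: int = MIN_WORDS) -> list:
    """Return the URLs whose main content falls below min_words, sorted."""
    return sorted(url for url, text in pages.items() if word_count(text) < min_words)


pages = {
    "/tag/rare-topic/": "One post tagged here.",
    "/category/empty/": "",
    "/guides/choosing-running-shoes/": "word " * 400,
}
print(flag_thin_pages(pages))
```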

4. Auto-Generated Search Pages

Some sites allow internal search result pages (such as example.com/search?q=shoes) to be indexed by search engines. These pages are usually cluttered with repetitive, dynamically generated content that serves no useful purpose to external search engines. If these are left unchecked, they quickly multiply and crowd the index.

5. Staging or Test Site Exposure

Leaving staging or development sites publicly accessible allows search engines to crawl and index them alongside your live site. This creates duplicate listings, confuses crawlers, and wastes indexing resources on pages that are not yet in production.

How Index Bloating Impacts SEO and Crawl Budget

Index bloating reduces both how often a site's best pages are crawled and how well they can rank. Understanding why this happens makes the fixes easier to prioritize.

1. Misallocation of Crawl Budget

Search engines allocate each site a crawl budget: roughly the number of URLs they will attempt to crawl within a given period. When your site has many low-value or duplicate URLs, much of that budget is wasted on crawling pages that don't matter. Fewer resources are then left for discovering, updating, or re-indexing valuable pages, which holds back your site's overall SEO performance.
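One way to see this waste is to measure what share of crawler requests goes to low-value URLs. This Python sketch assumes you have already extracted the paths Googlebot requested (for example, from your server access logs) and uses a hypothetical rule that treats any query string, /tag/ page, or internal search page as low value.

```python
from collections import Counter
from urllib.parse import urlsplit


def looks_low_value(path: str) -> bool:
    """Hypothetical rule: parameterized URLs, tag archives, and internal search."""
    parts = urlsplit(path)
    return bool(parts.query) or parts.path.startswith(("/tag/", "/search"))


def crawl_share(crawled_paths) -> dict:
    """Fraction of crawler requests spent on valuable vs. low-value URLs."""
    counts = Counter(
        "low_value" if looks_low_value(p) else "valuable" for p in crawled_paths
    )
    total = sum(counts.values())
    if total == 0:
        return {"valuable": 0.0, "low_value": 0.0}
    return {label: counts[label] / total for label in ("valuable", "low_value")}


crawled = [
    "/shoes/", "/shoes/?color=red", "/shoes/?color=blue", "/search?q=boots",
    "/tag/sale/", "/about/", "/shoes/?sort=newest", "/blog/post-1/",
]
print(crawl_share(crawled))  # {'valuable': 0.375, 'low_value': 0.625}
```

In this made-up sample, five of eight requests go to pages that should not compete for crawl attention.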

2. Late Indexing of Important Pages

When crawlers spend time on unimportant URLs, new or updated pages take longer to enter the index. This is especially damaging for time-sensitive content, which may miss its window to appear in search results. Over time, these delays can weaken your site's competitiveness in search rankings.

3. Dilution of Ranking Signals

Index bloat creates a situation where many similar pages compete against each other for the same keywords. Backlinks, user engagement, and other ranking signals are split across several URLs instead of consolidating on one authoritative page. As a result, primary pages may lose ranking strength and visibility in search results.

4. Poor User Experience

Outdated, irrelevant, or duplicate pages appearing in search results give users the wrong impression and can raise bounce rates. A cluttered index also makes it harder for visitors to land on the right page, frustrating them and eroding trust in the brand.

How to Identify Index Bloating on Your Website

Index bloating gradually weakens your site’s SEO by allowing low-value or unnecessary pages to occupy space in Google’s index. Recognizing index bloating early is the first step in keeping your crawl budget healthy and your rankings safe.

Using Google Search Console (Coverage & Indexing Reports)

Google Search Console is among the easiest tools for detecting index bloating. The Page indexing report (formerly Coverage) shows how many URLs Google has indexed, which you can compare with the number of relevant pages your site should have. If hundreds or thousands of indexed URLs fall outside your core content, index bloat is very likely. These are often tag pages, duplicates, or expired URLs that have no reason to be indexed.
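Once you have a list of indexed URLs (for example, exported from Search Console; the export format is not covered here), the comparison itself is plain set arithmetic. This Python sketch reads the intended URLs from a standard XML sitemap and reports both directions of the mismatch; the sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set:
    """Collect every <loc> value from a <urlset> sitemap document."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)}


def compare_index(indexed: set, intended: set) -> dict:
    return {
        "unexpected_indexed": indexed - intended,  # bloat candidates to review
        "missing_from_index": intended - indexed,  # important pages Google skipped
        "ratio": len(indexed) / len(intended) if intended else float("inf"),
    }


sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>https://example.com/shoes/</loc></url>"
    "</urlset>"
)
indexed = {
    "https://example.com/",
    "https://example.com/shoes/?color=red",
    "https://example.com/tag/sale/",
}
report = compare_index(indexed, sitemap_urls(sitemap))
print(sorted(report["unexpected_indexed"]))
print(report["missing_from_index"])
```

A ratio well above 1 is the same warning sign as the site: check described next, but computed from lists you control.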

Performing a site:domain.com Search

Searching Google for site:yourdomain.com gives a rough estimate of how many of your pages Google has indexed. Compare that number with your sitemap's URL count or the number of pages you actually publish. If the site has only 200 main pages but Google returns over 800 results, suspect index bloat. The result count is only an approximation, but this quick check helps surface odd or irrelevant pages showing up in search results.

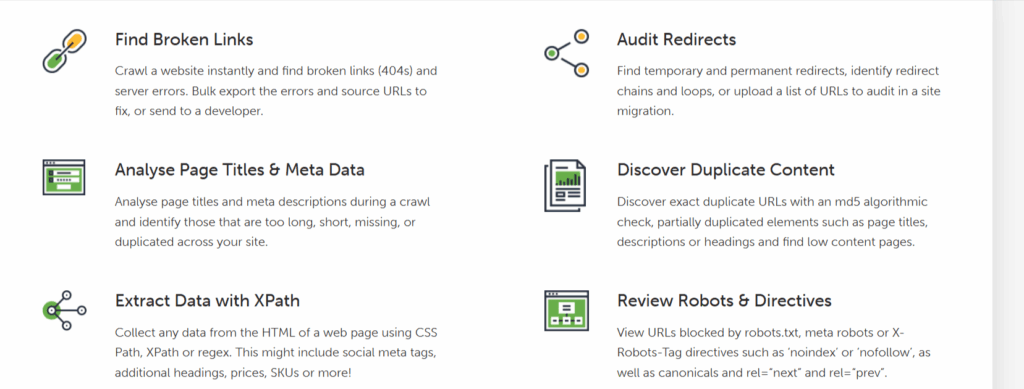

Analyzing Crawl Data with SEO Tools

Advanced tools such as Screaming Frog, Sitebulb, and Ahrefs crawl your site thoroughly and let you compare the URLs they crawl with those Google has indexed, which helps uncover duplicates, archive pages, or parameter-based URLs that inflate the index. Their crawl data lets you drill down into which sections or page types are needlessly bloating your index. To check indexing status in bulk, you can use Accu Index Check.
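A simple way to drill down, similar in spirit to segmenting a crawl in those tools (though not how any of them work internally), is to count indexed URLs per top-level section and flag parameterized URLs separately. The sketch below is in Python, and the URL list is hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def section_of(url: str) -> str:
    """Label a URL by its first path segment, marking parameterized variants."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    label = f"/{segments[0]}/" if segments else "/"
    return label + " [params]" if parts.query else label


indexed = [
    "https://example.com/",
    "https://example.com/shoes/",
    "https://example.com/shoes/?color=red",
    "https://example.com/shoes/?size=8",
    "https://example.com/tag/sale/",
    "https://example.com/tag/new/",
    "https://example.com/tag/clearance/",
]
for section, count in Counter(section_of(u) for u in indexed).most_common():
    print(f"{count:>5}  {section}")
```

Sections whose counts dwarf the number of pages you meant to publish there (here, /tag/) are the first places to look.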

SEO Fixes for Index Bloating Issues

A bloated index slows down the crawling of important pages and costs ranking potential. The fixes below keep the index lean so that search engines spend their effort on valuable, high-quality pages.

1. Remove or Noindex Low-Value Pages

Identify pages that provide almost no SEO benefits or user value: thin content, test pages, expired promotions. Then either remove them outright or block their indexing by search engines.

- If you want a page crawled but not indexed, add <meta name="robots" content="noindex,follow"> to its <head>.

- If a page should be gone for good, return a 410 (Gone) status; if a relevant replacement exists, 301-redirect it there instead.

- Review analytics and GSC reports regularly to find candidates for removal or noindex, such as pages with low traffic and high bounce rates.
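After applying these changes, it helps to verify what each URL actually tells crawlers. This Python sketch uses the standard library's HTMLParser to read <meta name="robots"> directives and classify a response; it only looks at the generic robots meta tag (not bot-specific tags such as googlebot, nor the X-Robots-Tag header), and `index_status` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for token in attr.get("content", "").split(","):
                if token.strip():
                    self.directives.add(token.strip().lower())


def robots_directives(html: str) -> set:
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return parser.directives


def index_status(status: int, html: str = "") -> str:
    """Classify a fetched URL using the removal, redirect, and noindex options."""
    if status == 410:
        return "gone"
    if status in (301, 308):
        return "redirected"
    if "noindex" in robots_directives(html):
        return "noindex"
    return "indexable"


page = '<html><head><meta name="robots" content="noindex,follow"></head></html>'
print(robots_directives(page), index_status(200, page))
```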

2. Consolidate Duplicate Pages (Canonical Tags & Redirects)

When several URLs serve the same content, ranking signals get split among them, so consolidate them onto one preferred URL.

- For duplicate content, add a canonical tag to the <head> of each duplicate pointing to the preferred URL (a self-referencing canonical on the preferred page is also good practice), for example:

- <link rel="canonical" href="https://example.com/preferred-url/">

- If the duplicate URLs don't need to exist at all, 301-redirect them to the preferred version: HTTP to HTTPS, non-www to www, missing trailing slash to trailing slash, and so on.

- Always use canonical URLs in internal links, and avoid canonical chains or loops.
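Chains often creep in when redirect rules pile up over time (HTTP to HTTPS, then non-www to www, then adding the slash). This Python sketch follows a redirect map, assumed here to be a simple dict of source to target exported from your server or CMS, and rewrites each rule to point straight at its final destination, raising an error on loops. The same check applies to canonical tags that point at URLs which themselves canonicalize elsewhere.

```python
def resolve(redirects: dict, url: str, max_hops: int = 10) -> tuple:
    """Follow redirects from url; return (final_url, hops). Raise on a loop."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


def flatten(redirects: dict) -> dict:
    """Point every source directly at its final destination (one hop each)."""
    return {source: resolve(redirects, source)[0] for source in redirects}


redirects = {
    "http://example.com/page": "https://example.com/page",
    "https://example.com/page": "https://www.example.com/page",
    "https://www.example.com/page": "https://www.example.com/page/",
}
print(resolve(redirects, "http://example.com/page"))  # ('https://www.example.com/page/', 3)
print(flatten(redirects))
```

After flattening, every legacy variant reaches the preferred URL in a single 301 instead of three.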

3. Block Unnecessary URLs via robots.txt (and X-Robots-Tag)

Stop crawlers from wasting requests on admin pages, internal search results, staging areas, or parameterized paths with no indexable value.

- Add Disallow rules to robots.txt for paths such as /wp-admin/, /search/, or internal preview paths.

- Use the X-Robots-Tag HTTP header (for example, X-Robots-Tag: noindex) to keep non-HTML files such as PDFs and images out of the index.

- Remember that robots.txt blocks crawling but does not prevent indexing: a blocked URL can still be indexed if other pages link to it. To keep a URL out of the index, use noindex, a redirect, or removal instead, and don't disallow a page you are trying to noindex, because crawlers cannot see a noindex tag on a page they are not allowed to fetch.
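You can test disallow rules before deploying them with Python's built-in urllib.robotparser. The robots.txt below is a hypothetical example. Note that it uses Disallow: /search without a trailing slash: a /search/ rule would not match the example.com/search?q=shoes pattern mentioned earlier, while /search matches any path starting with those characters (including something like /searchlight/). The standard-library parser handles simple prefix rules but not every extension Google supports, such as * and $ wildcards, so confirm final rules in Search Console.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for the rules described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /search
Disallow: /preview/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in [
    "https://example.com/search?q=shoes",
    "https://example.com/wp-admin/options.php",
    "https://example.com/shoes/",
]:
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(verdict, url)
```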

4. Optimize CMS Settings to Avoid Auto-Generating Useless Pages

Many platforms automatically generate archives, tag pages, or faceted URLs. Adjust your settings so the system does not create indexable duplicates by default.

- Disable any unused archive types (author, date, tag), or use your SEO plugin to set them to noindex.

- Make sure URLs carrying session IDs, tracking parameters, or ad hoc query strings are never used as canonical URLs.

- Ensure faceted navigation filters do not create pages for indexing (use noindex, canonical, or block as needed).
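The query-string side of this can be handled in code. This Python sketch strips session and tracking parameters and sorts the rest, so that variants of the same page resolve to one canonical URL. The parameter list (sessionid, sid, gclid, fbclid, and anything starting with utm_) is an assumption; replace it with whatever your platform actually appends.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that never change page content.
IGNORED_PARAMS = {"sessionid", "sid", "gclid", "fbclid"}
IGNORED_PREFIXES = ("utm_",)


def canonical_url(url: str) -> str:
    """Drop session/tracking parameters and sort the rest into a stable order."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
        and not key.lower().startswith(IGNORED_PREFIXES)
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url("https://example.com/shoes/?utm_source=news&sessionid=abc123&color=red"))
# https://example.com/shoes/?color=red
print(canonical_url("https://example.com/shoes/?size=8&color=red"))
# https://example.com/shoes/?color=red&size=8
```

Sorting the remaining parameters means ?size=8&color=red and ?color=red&size=8 produce the same canonical value.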

5. Use Pagination and Filters Correctly

Poorly handled pagination and unchecked filter combinations can make your URL count grow out of control. Set them up so that only meaningful pages get indexed.

- Provide a default canonical listing or a "view all" page for category content, and noindex or canonicalize paginated and filtered results as the situation demands.

- Don't let endless filter combinations get indexed; apply noindex,follow to low-value facet pages while keeping their links crawlable for Google.

- Keep URL patterns for paginated pages clear and consistent (like /category/page/2), and make sure these pages are reachable through internal links.
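A facet policy like this is easier to keep consistent when it lives in one function. The Python sketch below picks a robots meta value per URL under hypothetical rules: plain category pages and path-based paginated pages (like /category/page/2) stay indexable, a single color filter is treated as worth indexing, and every other filter or sort combination gets noindex,follow so its links remain crawlable.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical policy: which single facets have real search demand.
INDEXABLE_FACETS = {"color"}
MAX_INDEXABLE_FILTERS = 1


def robots_meta_for(url: str) -> str:
    """Return the robots meta content to emit for a listing URL."""
    params = dict(parse_qsl(urlsplit(url).query))
    if not params:
        return "index,follow"  # plain category page or /category/page/N
    if len(params) <= MAX_INDEXABLE_FILTERS and set(params) <= INDEXABLE_FACETS:
        return "index,follow"  # a single high-demand facet
    return "noindex,follow"  # keep links crawlable, keep the page out of the index


for url in [
    "https://example.com/shoes/page/2/",
    "https://example.com/shoes/?color=red",
    "https://example.com/shoes/?color=red&size=8",
    "https://example.com/shoes/?sort=price_asc",
]:
    print(robots_meta_for(url), url)
```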

Best Practices to Prevent Index Bloating in the Future

Keeping your site's index healthy takes ongoing maintenance and a disciplined process. The best practices below each come with a brief explanation and quick wins you can implement right away.

Regular Site Audits

Without regular audits, bloat can grow out of control through duplicate content, parameter explosions, thin pages, and incorrect indexing directives. Scheduled audits catch these issues before they pile up.

- Schedule monthly or quarterly crawls with Screaming Frog, Sitebulb, or Ahrefs.

- Compare crawl results with Google Search Console's Page indexing (Coverage) report to spot mismatches.

- Track key metrics over time, such as indexed URL counts and the size of the "Excluded" bucket, so that regressions are easy to spot.
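Tracking these numbers is only useful if someone notices a jump. This Python sketch flags any period where the indexed-URL count grew faster than a chosen threshold; the 20% threshold and the monthly figures are hypothetical, and in practice you would feed in counts recorded from your own GSC checks.

```python
def flag_regressions(history, max_growth: float = 0.20) -> list:
    """history: list of (period_label, indexed_url_count) in chronological order."""
    flagged = []
    for (_, previous), (label, current) in zip(history, history[1:]):
        if previous and (current - previous) / previous > max_growth:
            flagged.append(label)
    return flagged


# Hypothetical monthly indexed-URL counts.
history = [("2024-01", 1200), ("2024-02", 1250), ("2024-03", 2100), ("2024-04", 2150)]
print(flag_regressions(history))  # ['2024-03']
```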

Maintain a Clean Sitemap

An up-to-date XML sitemap tells search engine crawlers which pages are worth indexing. Keeping it clean means crawlers don't waste time following useless or outdated URLs.

- Put canonical, high-value pages in your sitemap and remove any expired or redirected URLs.

- The sitemap should update automatically as important pages are added or removed, and be resubmitted to GSC after major changes.

- Split very large sitemaps into logical groups (e.g., posts, products) to make monitoring easier.
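If your platform doesn't generate sitemaps the way you want, a small script can. This Python sketch writes sitemap XML documents from a list of canonical URLs, drops any URL you mark as redirected or expired, and splits the output into chunks; 50,000 URLs per file is the limit set by the sitemaps.org protocol (which also caps each file at 50 MB uncompressed). Calling it once per content group, such as posts and products, gives the logical split described above. The function name and inputs are illustrative.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # sitemaps.org protocol limit


def build_sitemaps(urls, exclude=(), chunk_size: int = MAX_URLS_PER_FILE) -> list:
    """Return one <urlset> XML string per chunk of canonical, non-excluded URLs."""
    keep = sorted(set(urls) - set(exclude))
    documents = []
    for start in range(0, len(keep), chunk_size):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in keep[start:start + chunk_size]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        documents.append(ET.tostring(urlset, encoding="unicode"))
    return documents


posts = [
    "https://example.com/blog/a/",
    "https://example.com/blog/b/",
    "https://example.com/blog/c/",
    "https://example.com/blog/old-promo/",
]
docs = build_sitemaps(posts, exclude={"https://example.com/blog/old-promo/"}, chunk_size=2)
print(len(docs))  # 2
print(docs[0])
```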

Use Structured URL Patterns

Consistent, predictable URL structures reduce accidental duplication and make global rules (robots, redirects, canonicalization) easier to apply.

- Standardize the URL format: HTTPS plus your preferred host, and consistently either with or without a trailing slash.

- Keep session IDs, unnecessary query strings, and automatically generated parameterized URLs out of your canonical URLs.

- Ensure that category, tag, and product URLs follow a clear hierarchy to prevent accidental branching.

Monitor Google Search Console Frequently

GSC remains the best tool for seeing your website the way Google does. Routine checks let you catch indexing anomalies and confirm that fixes actually take effect.

- Check the Coverage and Pages reports weekly, or whenever major changes are made to the site.

- Use the URL Inspection tool to validate changes and request re-indexing of fixed pages.

- Set up alerts or scheduled exports for key GSC reports so that you hear about sudden index changes quickly.

FAQs

How do you check index bloat?

You can check for index bloat by comparing the number of indexed pages reported in Google Search Console with the actual number of meaningful pages on your site. A crawling tool like Screaming Frog helps spot low-value or duplicate URLs.

What is index and noindex in SEO?

“Index” allows search engines to include a page in search results (this is the default behavior), while “noindex” tells them to keep it out. These directives determine whether a page can be found through search engines.

How do you stop a page from being indexed?

Add a noindex meta tag to the page's HTML head, set it through your CMS, or send an X-Robots-Tag: noindex HTTP header. Removing the page from your sitemap also signals that it isn't important, but on its own it does not stop indexing.

Can I turn off indexing?

Yes. You can add noindex tags to the pages you want excluded, or password-protect them so search engines cannot reach them. Blocking their URLs in robots.txt stops crawling, but those URLs can still be indexed if other pages link to them.

In Conclusion

Index bloating quietly works against SEO by wasting crawl budget and keeping your best pages from gaining visibility in search engines. Regular audits, targeted cleanup (noindex, removal, or redirects), consistent URL and sitemap management, and monitoring in Google Search Console keep bloat at bay so that crawlers can work efficiently. Treat index hygiene as ongoing maintenance rather than a one-time fix, and you'll speed up crawling of the pages that matter, consolidate ranking signals, and keep search traffic flowing to your most important pages.